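Detailed Chinese-language guides on this topic are hard to find,

so I decided to document the deployment process myself, with screenshots, starting from the AWS login (signing up for an AWS account is not covered).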

1. Log in to AWS

Open the AWS home page and click "Sign In to the Console".

Sign in.

2. Launch 3 instances (one NameNode, two DataNodes)

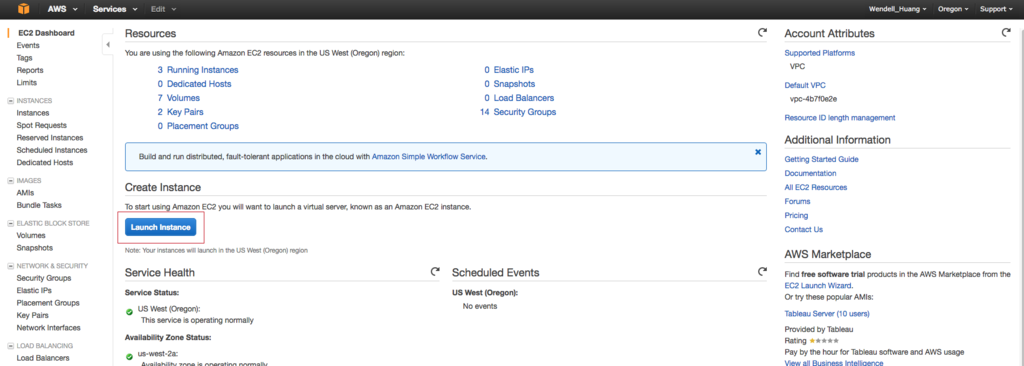

Select the EC2 service.

Click Launch Instance.

Choose an Ubuntu AMI (Amazon Machine Image).

Choose the default instance type (free tier).

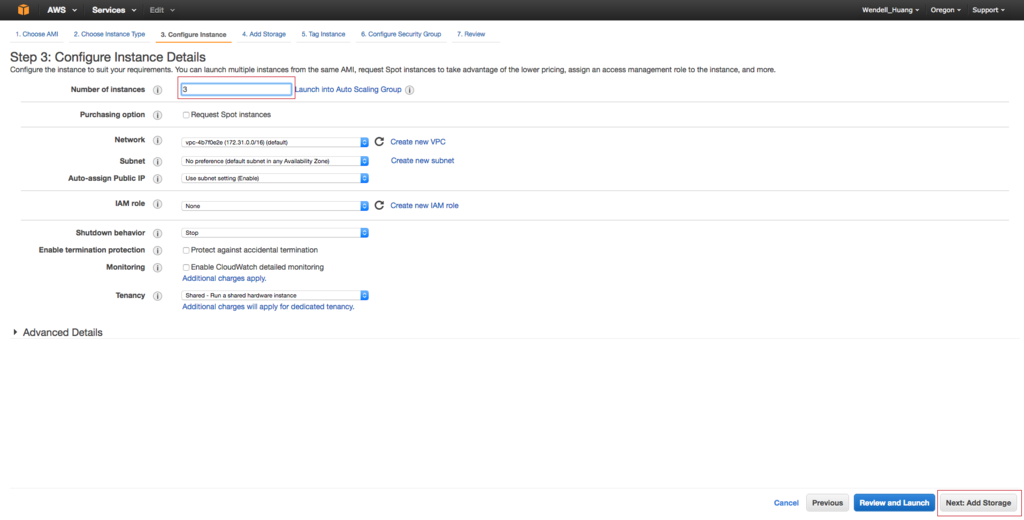

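Set the number of instances to 3 so that all three are created at once.

For storage, the default 8 GB is enough.

This step can be skipped.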

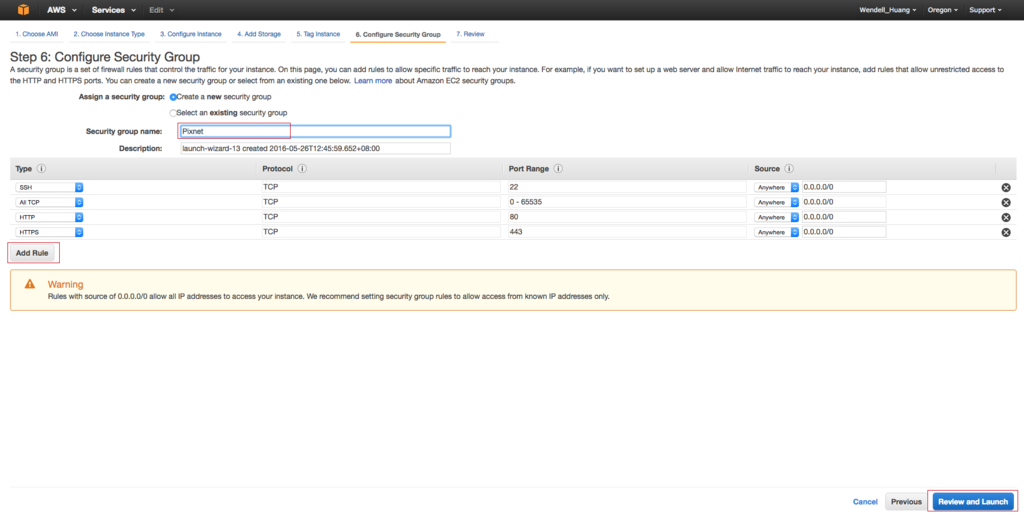

For the security group, add the rules as shown.

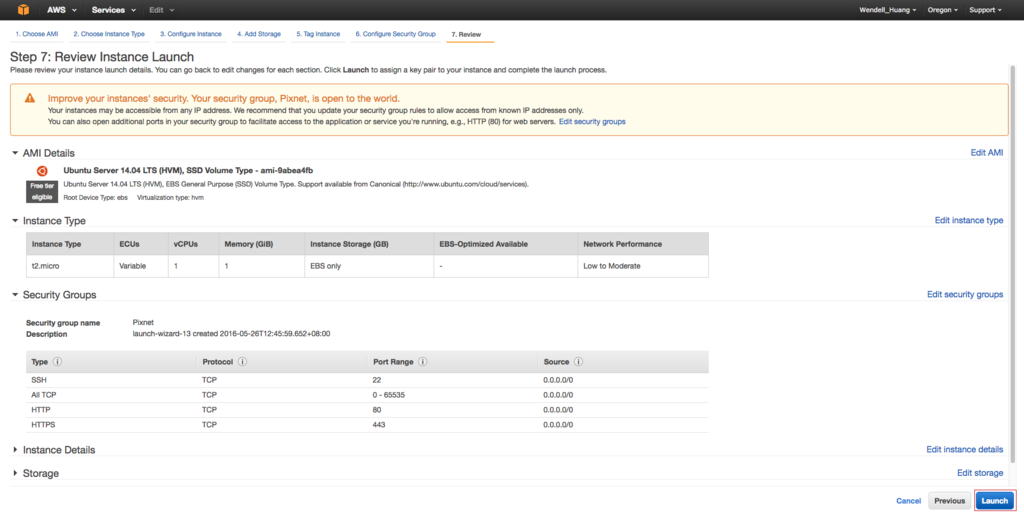

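This step can be skipped (it only shows the settings for review).

Create a new SSH key pair for login.

Download the SSH key and change its permissions: chmod 700 ~/Downloads/pixnet.pem

The machines are now created in AWS.

3. Rename the instances server1, server2, and server3

4. SSH login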

mv ~/Downloads/pixnet.pem ~/.

Get the connection string.

Copy the connection string and log in.
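As a rough sketch, the connection string has the following shape. The host name below is a made-up placeholder, not a real instance; `ubuntu` is the default login user on Ubuntu AMIs. Substitute the public DNS name shown for your own instance.

```shell
# Path of the key file after the mv step above
KEY_FILE="$HOME/pixnet.pem"

# Placeholder public DNS name (assumption, replace with your instance's)
EC2_HOST="ec2-203-0-113-10.ap-northeast-1.compute.amazonaws.com"

# Ubuntu AMIs log in as the "ubuntu" user by default
SSH_COMMAND="ssh -i $KEY_FILE ubuntu@$EC2_HOST"

# Print the command so it can be reviewed before running it
echo "$SSH_COMMAND"
```

Printing the command first, rather than running it directly, makes it easy to confirm the key path and host before connecting.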

5. Common steps (perform these on every machine)

Install OpenSSH.

Install Java.

- (sudo add-apt-repository ppa:webupd8team/java)

- (sudo apt-get update)

- (sudo apt-get install oracle-java8-installer)

- When the installation finishes, you will see the result.

- Check the Java version (java -version).

Create the hadoop group.

sudo addgroup hadoop

Create the hduser user.

sudo adduser --ingroup hadoop hduser

Edit /etc/hosts.

sudo vim /etc/hosts
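The entries to add map each node's private IP to a short host name. A minimal sketch follows, assuming the names master, slave1, and slave2 used later in this guide; the IP addresses are placeholders, so use each instance's private IP from the EC2 console. The sketch writes to a scratch file by default so it is safe to try.

```shell
# Target file; defaults to a scratch file so the sketch is safe to run.
# On a real machine, add the same lines to /etc/hosts with sudo.
HOSTS_FILE="${HOSTS_FILE:-$(mktemp)}"

# Placeholder private IPs (assumption): replace with your instances' IPs.
cat >> "$HOSTS_FILE" <<'EOF'
172.31.0.10 master
172.31.0.11 slave1
172.31.0.12 slave2
EOF

# Show the result for review
cat "$HOSTS_FILE"
```

Every machine needs the same three entries so that the nodes can reach each other by name.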

Disable IPv6.

sudo vim /etc/sysctl.conf

Add the following lines to the end of the file:

net.ipv6.conf.all.disable_ipv6 = 1

net.ipv6.conf.default.disable_ipv6 = 1

net.ipv6.conf.lo.disable_ipv6 = 1
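If you prefer not to edit the file by hand, the same three settings can be appended from a script. The helper name `append_ipv6_settings` is made up for this sketch; it skips lines that already exist, so running it twice does not duplicate them.

```shell
# Append the three IPv6 settings to a sysctl config file, skipping any
# line that is already present so the function can be re-run safely.
append_ipv6_settings() {
  conf_file="$1"
  for scope in all default lo; do
    line="net.ipv6.conf.$scope.disable_ipv6 = 1"
    grep -qxF "$line" "$conf_file" 2>/dev/null || echo "$line" >> "$conf_file"
  done
}

# On a real machine (as root):
#   append_ipv6_settings /etc/sysctl.conf
#   sysctl -p                                      # reload the settings
#   cat /proc/sys/net/ipv6/conf/all/disable_ipv6   # 1 means IPv6 is off
```

The settings only take effect after `sysctl -p` or a reboot.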

6. Install Hadoop on the master (NameNode)

Generate an SSH key

Generate the SSH key with the following commands.

ssh-keygen -t rsa

cat $HOME/.ssh/id_rsa.pub >> $HOME/.ssh/authorized_keys

Download Hadoop 2.6.4

- cd /usr/local

- Go to http://www.apache.org/dyn/closer.cgi/hadoop/common/hadoop-2.6.4/hadoop-2.6.4.tar.gz and pick a mirror to download from, for example: sudo wget http://ftp.twaren.net/Unix/Web/apache/hadoop/common/hadoop-2.6.4/hadoop-2.6.4.tar.gz

- sudo tar xzvf hadoop-2.6.4.tar.gz

- sudo mv hadoop-2.6.4 hadoop

Change the owner.

sudo chown hduser:hadoop -R /usr/local/hadoop

Create the tmp folder for Hadoop.

sudo mkdir -p /usr/local/hadoop_tmp/hdfs/namenode

sudo chown hduser:hadoop -R /usr/local/hadoop_tmp/hdfs/namenode

Hadoop configuration settings

- cd /usr/local/hadoop/etc/hadoop

- vim core-site.xml

- vim hdfs-site.xml

- vim yarn-site.xml

- cp mapred-site.xml.template mapred-site.xml && vim mapred-site.xml

- Edit the environment: vim ~/.bashrc (append at the end, starting from the HADOOP ENVIRONMENT block)

- vim hadoop-env.sh (set JAVA_HOME)

- Copy the master's ~/.ssh/id_rsa.pub into ~/.ssh/authorized_keys on slave1, slave2, slave3

- Copy the hadoop folder to /usr/local on slave1 and slave2
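The contents of the four XML files are not listed above, so here is a minimal sketch of what they might contain for this layout. Everything in it is an assumption rather than the original configuration: the host name master, port 9000, and a replication factor of 2 (one copy per DataNode). The storage paths match the hadoop_tmp folders created in this guide. The script writes to a scratch directory unless CONF_DIR is set.

```shell
# Directory to write into; defaults to a scratch directory so the sketch
# is safe to try. On the master: CONF_DIR=/usr/local/hadoop/etc/hadoop
CONF_DIR="${CONF_DIR:-$(mktemp -d)}"

# core-site.xml: where clients find the NameNode (port 9000 is an assumption)
cat > "$CONF_DIR/core-site.xml" <<'EOF'
<?xml version="1.0"?>
<configuration>
  <property>
    <name>fs.defaultFS</name>
    <value>hdfs://master:9000</value>
  </property>
</configuration>
EOF

# hdfs-site.xml: replication factor and the storage folders from this guide
cat > "$CONF_DIR/hdfs-site.xml" <<'EOF'
<?xml version="1.0"?>
<configuration>
  <property>
    <name>dfs.replication</name>
    <value>2</value>
  </property>
  <property>
    <name>dfs.namenode.name.dir</name>
    <value>file:/usr/local/hadoop_tmp/hdfs/namenode</value>
  </property>
  <property>
    <name>dfs.datanode.data.dir</name>
    <value>file:/usr/local/hadoop_tmp/hdfs/datanode</value>
  </property>
</configuration>
EOF

# yarn-site.xml: ResourceManager host and the shuffle service for MapReduce
cat > "$CONF_DIR/yarn-site.xml" <<'EOF'
<?xml version="1.0"?>
<configuration>
  <property>
    <name>yarn.resourcemanager.hostname</name>
    <value>master</value>
  </property>
  <property>
    <name>yarn.nodemanager.aux-services</name>
    <value>mapreduce_shuffle</value>
  </property>
</configuration>
EOF

# mapred-site.xml: run MapReduce jobs on YARN
cat > "$CONF_DIR/mapred-site.xml" <<'EOF'
<?xml version="1.0"?>
<configuration>
  <property>
    <name>mapreduce.framework.name</name>
    <value>yarn</value>
  </property>
</configuration>
EOF

echo "Wrote config files to $CONF_DIR"
```

Because the whole hadoop folder is copied to the slaves afterwards, the same files end up on every node.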

7. Install Hadoop on the slaves (DataNodes)

- mkdir -p /usr/local/hadoop

- chown hduser:hadoop /usr/local/hadoop

- scp -r hduser@master:/usr/local/hadoop/* hduser@slaves:/usr/local/hadoop

- su - hduser

- vim ~/.bashrc (repeat the environment edits made on the master)

- vim /usr/local/hadoop/etc/hadoop/slaves, then add slave1 slave2 slave3

- Create the DataNode tmp folder: sudo mkdir -p /usr/local/hadoop_tmp/hdfs/datanode

- Change the owner of the /usr/local/hadoop_tmp folder to hduser:hadoop
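The ~/.bashrc edit that is repeated on every node could look roughly like the block below. This is a sketch, not the original file: the helper name `write_hadoop_env` is made up, and the JAVA_HOME path assumes the usual install location of the Oracle Java 8 installer package, so check it on your machine.

```shell
# Append a Hadoop environment block to a shell profile.
# JAVA_HOME is an assumption; verify the path under /usr/lib/jvm first.
write_hadoop_env() {
  profile="$1"
  cat >> "$profile" <<'EOF'
# HADOOP ENVIRONMENT
export JAVA_HOME=/usr/lib/jvm/java-8-oracle
export HADOOP_HOME=/usr/local/hadoop
export HADOOP_CONF_DIR=$HADOOP_HOME/etc/hadoop
export PATH=$PATH:$HADOOP_HOME/bin:$HADOOP_HOME/sbin
EOF
}

# On each node, as hduser:
#   write_hadoop_env ~/.bashrc
#   source ~/.bashrc
```

Adding both bin and sbin to PATH is what lets commands such as hdfs and start-dfs.sh run without their full paths.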

8. Format the NameNode

- cd /usr/local/hadoop

- hdfs namenode -format

- start-dfs.sh

- start-yarn.sh

9. Check status

- On the master, run jps
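With a default setup like this one, jps on the master would typically list NameNode, SecondaryNameNode, and ResourceManager, while each slave would list DataNode and NodeManager. The sketch below reports which expected daemons are absent from a jps listing; the helper name `missing_daemons` is made up for illustration.

```shell
# Print every expected daemon name that does not appear in the jps output.
# $1 is the jps output text; the remaining arguments are daemon names.
missing_daemons() {
  jps_output="$1"
  shift
  for daemon in "$@"; do
    # -w matches whole words, so "NameNode" does not match "SecondaryNameNode"
    echo "$jps_output" | grep -qw "$daemon" || echo "$daemon"
  done
}

# On the master:
#   missing_daemons "$(jps)" NameNode SecondaryNameNode ResourceManager
# On a slave:
#   missing_daemons "$(jps)" DataNode NodeManager
```

No output means every expected daemon is running. If a name is printed, the log files under /usr/local/hadoop/logs are the first place to look.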
